The following steps show how to add command line arguments.

1. Choose Project | Set Program's Arguments. You'll see the Select Target dialog box.
2. Select Debug as the target.
3. Type the arguments you want to use, such as Hello World I Love You!, in the Program Arguments field and click OK.

The IDE is now set to provide command line arguments to the application when you're using the specified target, which is Debug in this case. When you run the application after adding the command line arguments, you should see them in the output.

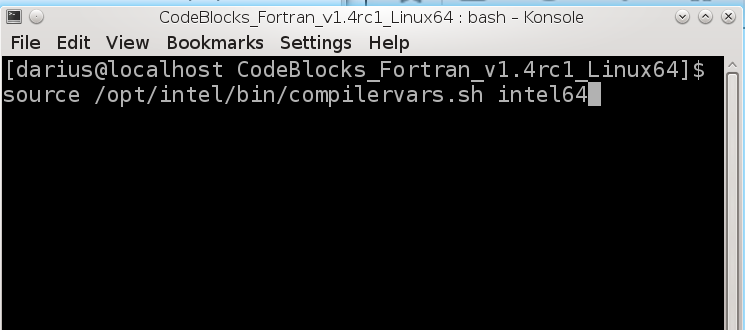

This is an update of a post that originally appeared on Novem.

Most application environments provide a means of setting command line arguments, and CodeBlocks is no exception. The example shown in Listing 6-12 on page 167 of C++ All-In-One for Dummies, 4th Edition requires that you set command line arguments in order to see anything but the barest output from the debugger. This post discusses the requirements for setting command line arguments for debugging purposes.

Let's begin with the example without any configuration. Every application has one command line argument: the path and application executable name. To see this argument, change the line that currently reads `for (int index = 1; index < argc; index++)` to read `for (int index = 0; index < argc; index++)` instead (setting `index = 1` causes the program not to show the first argument). Now when you run the example shown in Listing 6-12, you'll see the path and executable name as a minimum. This is the first argument passed to the application. If you run this example, you may see a different path, but the command line executable should be the same. The point is that you see at least one argument as output.

However, most people will want to test their applications using more than one argument. In order to do this, you must pass command line arguments to the application. Start by changing the code back to its original form, where `index = 1`.

In other words, executing something under the context of a binding object is the same as if that code were in the same place where that binding was defined (remember the 'anchor' metaphor). If you try to print `foo` directly, you will get an error, because `foo` was never defined outside of the method.
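The point about running code in the context of a binding can be sketched in Ruby as follows. This is a minimal illustration, not the original listing: the method name `return_binding` and the value 100 are assumptions chosen for the example.

```ruby
# Hypothetical helper: defines a local variable and returns a binding
# (the 'anchor') for the inside of the method.
def return_binding
  foo = 100
  binding
end

anchor = return_binding

# Code evaluated through the binding runs as if it were written inside
# return_binding, so it can read foo.
puts anchor.eval("foo + 1")   # prints 101

# Outside the method there is no foo, so referring to it directly fails.
begin
  foo
rescue NameError => e
  puts e.class                # prints NameError
end
```

The `begin`/`rescue` is only there so the sketch keeps running after the error; without it, the bare `foo` would stop the program.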
It would seem like 500 is the most logical conclusion, but because of the 'closure' effect this will print 1. This happens because the proc is using the value of `count` from the place where the proc was defined, and that's outside of the method definition.

Where do Ruby procs & lambdas store this scope information? The answer is a Binding object. When you create a Binding object via the `binding` method, you are creating an 'anchor' to this point in the code. Every variable, method & class defined at this point will be available later via this object, even if you are in a completely different scope. With such an object, `foo` is available thanks to the binding, even though we are outside of the method.
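As a rough sketch of the 'anchor' idea, the example below captures a binding inside an instance method and then uses it from the top level. The `Greeter` class and its method names are hypothetical and exist only for this illustration.

```ruby
# Hypothetical class used only to have something to anchor to.
class Greeter
  def initialize(name)
    @name = name
  end

  def shout
    "HELLO, #{@name.upcase}!"
  end

  # Returns a binding anchored inside this method.
  def anchor
    foo = 100
    binding
  end
end

b = Greeter.new("world").anchor

# The local variable from the method body is reachable through the binding.
puts b.local_variable_get(:foo)   # prints 100

# Methods are reachable too, because the binding also remembers self.
puts b.eval("shout")              # prints HELLO, WORLD!
puts b.receiver.class             # prints Greeter
```

`local_variable_get` and `receiver` are standard `Binding` methods; `eval` is used here only to show that a method call resolves against the captured object.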

Ruby procs & lambdas also have another special attribute. When you create a Ruby proc, it captures the current execution scope with it. This concept, which is sometimes called closure, means that a proc will carry with it values like local variables and methods from the context where it was defined. They don't carry the actual values, but a reference to them, so if the variables change after the proc is created, the proc will always have the latest version.

Consider a program that ends with `p call_proc(my_proc)`. In this example we have a local `count` variable, which is set to 1. We also have a proc named `my_proc`, and a `call_proc` method which runs (via the `call` method) any proc or lambda that is passed in as an argument. What do you think this program will print?
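A program of that shape might look like the sketch below. The names `count`, `my_proc`, and `call_proc` come from the description; the body of `call_proc` and the block given to the proc are assumptions, and the block returns `count` so that `p` can display it.

```ruby
# Runs any proc or lambda that is passed in.
def call_proc(my_proc)
  count = 500    # a separate local variable; the proc never sees it
  my_proc.call
end

count   = 1
my_proc = proc { count }   # uses count from the scope where the proc is defined

p call_proc(my_proc)       # prints 1

# The proc holds a reference to count, not a copy, so a later change is visible.
count = 2
p call_proc(my_proc)       # prints 2
```

The second call illustrates the reference behavior: nothing about `my_proc` changed, yet it reports the updated value.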

Taking a look at this list, we can see that lambdas are a lot closer to a regular method than procs are.
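To make that comparison concrete, here is a small sketch of two well-known differences: argument checking and the behavior of `return`. The variable and method names are hypothetical and chosen only for the example.

```ruby
strict  = lambda { |a, b| [a, b] }
lenient = proc   { |a, b| [a, b] }

# A proc fills in missing arguments with nil.
p lenient.call(1)            # prints [1, nil]

# A lambda checks its arguments the way a method does.
begin
  strict.call(1)
rescue ArgumentError => e
  puts e.class               # prints ArgumentError
end

# return inside a lambda only leaves the lambda.
def return_from_lambda
  l = lambda { return :from_lambda }
  l.call
  :after_lambda
end

# return inside a proc leaves the enclosing method.
def return_from_proc
  pr = proc { return :from_proc }
  pr.call
  :after_proc                # never reached
end

p return_from_lambda         # prints :after_lambda
p return_from_proc           # prints :from_proc
```

In both cases the lambda behaves the way a method call would, which is the sense in which it is closer to a regular method.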
